Originally published 4 Nov 2015 on the Bitcoin StackExchange. This post has not been reviewed by any Bitcoin experts and so likely contains errors.

Satoshi Nakamoto’s [Bitcoin design paper][nakamoto 08 whitepaper] describes blockchain pruning (section 7), so why haven’t we implemented it yet? This seems especially important given recent scalability concerns.

Unfortunately, Nakamoto-style blockchain pruning is not safe with current blocks and transactions, although future improvements may allow us to prune some space from future blocks and transactions.

Let’s think through the idea of deleting a transaction for a moment. Let’s say we have two particular transaction in the blockchain:

- Alice mines 50 bitcoins

- Alice pays Bob 50 bitcoins

Now we delete Alice’s coinbase (mining) transaction:

- [PURGED]

- Alice pays Bob 50 bitcoins

Wow! That looks like 50 bitcoins appeared out of nowhere!

What if that transaction has lots of proof of work?

Could you trust the Alice-pays-Bob transaction if it had 50,000 confirmations even though you can’t see where those bitcoins came from? Sure, that sounds like that might be a reasonable trade off of reduced security for increased convenience.

But in order to validate future transactions, nodes don’t just need to know who has bitcoins—they also need to know who doesn’t have bitcoins. For example, imagine Bob hasn’t spent his 50 bitcoins, so that transaction should be part of the blockchain; yet, when you download the blockchain from Mallory’s node, this is what you get:

- [PURGED]

- [PURGED]

At some point in the future, Bob goes to spend his 50 bitcoins. Other nodes who didn’t sync from Mallory know Bob has 50 bitcoins, so they accept blocks with that transaction. Your node rejects blocks with that transaction because it doesn’t think Bob has 50 bitcoins, permanently forking you off of the consensus blockchain. This is called consensus failure and it’s one of the things we try really hard to avoid in Bitcoin.

Why not just keep track of amounts and purge the rest?

One way around this dilemma would be if we could split transactions into different parts:

-

The parts we needed to build the Unspent Transaction Output (UTXO) set that full nodes use to prove whether or not Bob has 50 bitcoins.

-

Everything else, such as the large public keys and signatures we use to prove Alice was authorized to spend those 50 bitcoins.

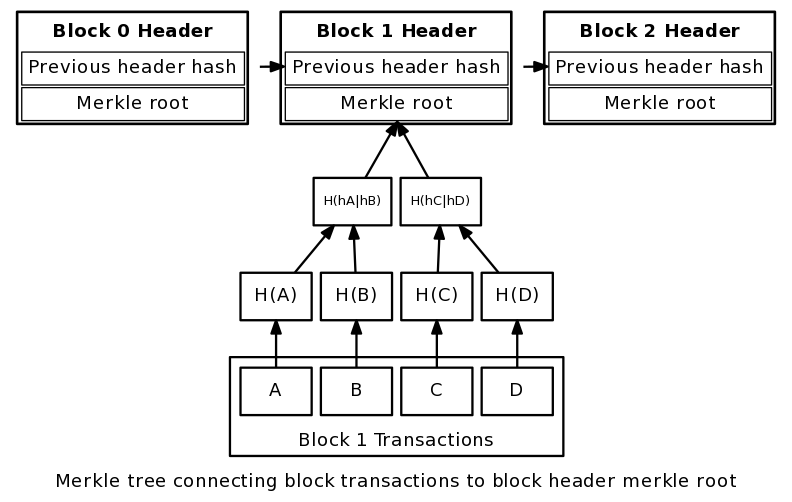

Here we encounter an additional problem with transaction pruning, a limitation of the way Nakamoto designed Transaction Identifiers (txids). Nakamoto’s design simply hashes the entire transaction and uses that to form the leaf nodes of the merkle tree whose root node gets included in the block header and secured with proof of work.

(Image from 21.co, CC-By-SA license)

Because of this design, in order to verify the UTXO-required data, you also need to download the entire transaction, including its public keys and signatures which constitute about 1/3 to 2/3 of a transaction.

This makes Nakamoto’s whitepaper-style pruning in Bitcoin right now pretty much impossible. Happily, some smart people have been working on improving the situation.

Segregated Witness

…which sounds like some weird law-enforcement term…

Blockstream’s testnet sidechain, Elements Alpha, includes a nifty feature called segregated witness. First, some background on witnesses in cryptography:

A witness is the data needed to prove some other data’s validity. This probably derives from contract law, where you often get other people to witness your signature on important documents. In the case of Bitcoin, the witness to a particular spend being valid is the scriptPubKey of the output being spent and scriptSig of the input that references it in the spending transaction.

In Elements Alpha and the new Liquid mainnet Sidechain, the witness is “segregated” into a different part of the block and is not covered by the txid hash. That means that nodes who want to reduce their download requirements can skip downloading witnesses for transactions with more than (say) 50,000 confirmations. However, now they can still use the block header merkle root to verify that all of the non-witness data (including the amounts transferred) are correct, and use that to build a correct UTXO set.

I can’t find a source right now, but I think I’ve heard Wuille and Maxwell say that for typical Bitcoin mainnet transactions, this would mean about 1/3 of the transaction data doesn’t have to be downloaded by nodes that don’t want to validate old signatures. On Elements Alpha where the witness is larger for transactions that use value blinding, this would mean 2/3 of the data doesn’t have to be downloaded.

As a bonus, segregated witness also “completely eliminates all known forms of transaction malleability” (Wuille’s description).

When will this appear in Bitcoin?

You’re in luck: just a few days ago (21 Oct 2015), Luke Dashjr presented an idea for how segregated witness can be soft forked onto Bitcoin mainnet. (Previously, it had been thought that a hard fork would be necessary.)

The payer pays to a scriptPubKey that always returns true but which contains the hash of a redeem script like P2SH does:

<hash_of_redeem_script> OP_TRUE

(I just made that up; I don’t know what the real construction will look like.)

When the person paid later goes to spend, they provide an empty scriptSig in the transaction itself. This is valid to old nodes, but upgraded nodes know to look somewhere else in the block for the actual scriptSig. The witness has been segregated.

For both old nodes and new nodes, they calculate the txid using the empty scriptSig, so the data covered by the merkle root still covers the important value amounts and references.

I don’t know exactly how well this compares to the segregated witness in Elements Alpha (does that cover the scriptPubKey as well?), but it does most of the work in a convenient soft forkable construction.

Since this is week-old engineering, it may change a lot or even be found to be unworkable in the coming months, so don’t expect to see it in the immediate future—but, long term, do expect to start seeing some new pre-download pruning options appear.

{% include links.md %} {% include references.md %}